Human-in-the-Loop (HITL) Patterns in AWS Agentic AI Workflows

In 2026, the conversation around Generative AI has matured from “What can it do?” to “How do we govern what it does?” As enterprises move beyond simple Retrieval-Augmented Generation (RAG) into autonomous Agentic Systems, we are witnessing a fundamental shift in software architecture. We are no longer just building applications; we are building Digital Employees.

For regulated industries (Insurance, Financial Services, Life Sciences), autonomy without accountability is a non-starter. A “hallucinated” insurance payout or an unvalidated medical diagnosis isn’t just a bug; it’s a compliance catastrophe.

This is where Human-in-the-Loop (HITL) comes in. Gone are the days of the legacy Amazon A2I (Augmented AI) being the only tool in the shed. Today, we utilize sophisticated integrations between Amazon Bedrock AgentCore, AWS Step Functions, and frameworks like Strands Agents SDK to create systems that are 80% autonomous and 100% accountable.

Strategic Patterns for HITL (Why is the human involved?)

Strategic patterns (often called Governance or Interaction patterns) deal with the high-level relationship between the human and the AI. They are concerned with fiduciary safety, risk management, and outcome quality.

1. Confirmation Gate Pattern

The agent is fully capable and confident but is legally or procedurally barred from executing the final action without a human “witness.”

Example (HR/Legal): An AI agent conducts an internal investigation into a policy violation. It gathers evidence, interviews stakeholders via email, and drafts a termination notice. Even if the agent is 100% sure, the “Confirmation Gate” ensures a Human HR Director clicks “Send” on that email. The agent is the pilot, and the human is the flight lead providing final clearance.
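A minimal sketch of the gate, assuming a hypothetical `PendingAction` record and `execute` helper (neither comes from an AWS SDK). The agent can prepare the action in full, but execution raises until a named human has signed off.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class PendingAction:
    """An action the agent has fully prepared but may not execute itself."""
    description: str
    run: Callable[[], str]
    approved_by: Optional[str] = None  # set only by a human reviewer


def approve(action: PendingAction, reviewer: str) -> None:
    """Record the human sign-off; meant to be called from the review UI."""
    action.approved_by = reviewer


def execute(action: PendingAction) -> str:
    """Run the action only if a human has approved it."""
    if action.approved_by is None:
        raise PermissionError(f"'{action.description}' needs human confirmation")
    return action.run()


notice = PendingAction("send termination notice", run=lambda: "email sent")
try:
    execute(notice)            # agent attempt without sign-off
except PermissionError as err:
    blocked_reason = str(err)

approve(notice, reviewer="hr.director")
result = execute(notice)       # succeeds once the human has confirmed
```

The point of the design is that the approval field lives outside the agent's reach, so even a fully confident agent cannot close the gate on its own.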

2. Uncertainty Escalation Pattern

The agent actively monitors its own confidence levels. If it encounters a scenario where its internal “certainty score” drops below a defined threshold (e.g., 80%), it pauses and asks for help.

Example (Customer Support): A support agent is handling a refund request. It understands “damaged product” (High Confidence) but fails to understand a complex, slang-heavy complaint about “regional shipping nuances” (Low Confidence). Instead of guessing and risking a brand PR disaster, it summarizes the situation for a human agent: “I understand the customer is upset about shipping, but I don’t understand the specific regional complaint. Can you clarify?”
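The routing decision can be sketched in a few lines. This assumes the agent already supplies a confidence value between 0 and 1; how that score is produced and calibrated is outside the sketch, and `AgentDecision` and `route` are illustrative names.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.80  # the example threshold from the text


@dataclass
class AgentDecision:
    action: str
    confidence: float  # assumed to be supplied by the agent, in [0, 1]
    summary: str       # what the agent would tell a human if it escalates


def route(decision: AgentDecision, threshold: float = CONFIDENCE_THRESHOLD) -> dict:
    """Proceed when confident; otherwise escalate with a summary for the human."""
    if decision.confidence >= threshold:
        return {"route": "auto", "action": decision.action}
    return {"route": "human", "question": decision.summary}


damaged = route(AgentDecision("issue_refund", 0.95,
                              "Customer reports a damaged product."))
unclear = route(AgentDecision("issue_refund", 0.55,
                              "Customer is upset about shipping; regional complaint unclear."))
```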

3. Two-Person Rule (Dual Authorization) Pattern

For high-value transactions, the agent acts as the initiator, but the system requires two distinct human roles to approve the action.

Example (Finance): An agent identifies a late invoice and suggests a $50,000 wire transfer. The pattern forces a Finance Manager to approve the validity of the invoice and a CFO to authorize the actual movement of funds. The agent coordinates the “signatures” but never holds the “pen.”
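One way to enforce the rule is to make authorization a property of the request rather than of the agent. The sketch below assumes two hypothetical role names taken from the example and rejects the same person signing twice.

```python
from dataclasses import dataclass, field

REQUIRED_ROLES = frozenset({"finance_manager", "cfo"})  # roles from the example


@dataclass
class TransferRequest:
    amount_usd: int
    approvals: dict = field(default_factory=dict)  # role -> approver id

    def approve(self, role: str, approver: str) -> None:
        if role not in REQUIRED_ROLES:
            raise ValueError(f"unknown role: {role}")
        # One person may not provide both signatures.
        if approver in self.approvals.values():
            raise PermissionError(f"{approver} has already approved this request")
        self.approvals[role] = approver

    def is_authorized(self) -> bool:
        return set(self.approvals) == REQUIRED_ROLES


request = TransferRequest(50_000)
request.approve("finance_manager", "alice")
after_one_signature = request.is_authorized()

try:
    request.approve("cfo", "alice")   # same human in a second role
    same_person_blocked = False
except PermissionError:
    same_person_blocked = True

request.approve("cfo", "bob")
after_two_signatures = request.is_authorized()
```

The agent only creates the `TransferRequest` and collects approvals; the funds movement would be triggered by application code that checks `is_authorized()`.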

4. Draft & Refine (Collaborative) Pattern

This is a “Human-Led, AI-Augmented” flow. The agent does the heavy lifting of synthesis, and the human provides the “creative finishing.”

Example (Software Engineering): A “Coding Agent” is tasked with migrating a legacy database. It creates a 20-step migration plan and drafts the SQL scripts. The human engineer doesn’t just “approve” it; they edit step 4 and regenerate step 12. The agent then adjusts the remaining 8 steps based on those human tweaks.
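The review round in this example can be sketched as a function that applies the human's edits and reports which steps the agent should redo. The rule used here (regenerate the requested steps plus everything after the last step the human touched) is an assumption chosen to match the example, and `apply_review` is a hypothetical helper.

```python
from __future__ import annotations


def apply_review(plan: list[str], edits: dict[int, str],
                 regenerate: set[int]) -> tuple[list[str], list[int]]:
    """Apply human edits (1-based step numbers) and list the steps the agent must redo."""
    revised = list(plan)
    for step, text in edits.items():
        revised[step - 1] = text
    touched = set(edits) | regenerate
    last_touched = max(touched, default=len(plan))
    # Requested regenerations plus every step downstream of the last human change.
    downstream = set(range(last_touched + 1, len(plan) + 1))
    return revised, sorted(regenerate | downstream)


plan = [f"step {n}" for n in range(1, 21)]
revised, redo = apply_review(plan, edits={4: "step 4 (human edit)"}, regenerate={12})
```

For the 20-step plan, `redo` contains step 12 and the eight steps after it, while the human's wording for step 4 is kept verbatim.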

5. Safety Boundary (Policy Interception) Pattern

This pattern is “Passive” until a boundary is hit. The agent doesn’t “ask” for permission; it is stopped by an external policy engine.

Example (Cloud Ops): An agent is optimizing cloud costs and decides to shut down “unused” servers. It works fine for 10 servers, but when it attempts to shut down a server tagged #Production-Critical, an external Policy Guardrail intercepts the command. The agent receives an “Access Denied” error, and a human SRE is alerted to review why the agent attempted to touch a restricted resource.
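A minimal sketch of the interception, assuming a hypothetical tag-based policy and an in-memory `alerts` list standing in for paging the SRE. In practice the check would live in an external policy engine rather than in the same process as the agent.

```python
RESTRICTED_TAGS = {"Production-Critical"}  # assumed policy, owned outside the agent
alerts: list = []                          # stand-in for notifying the on-call SRE


def guarded_shutdown(server: dict) -> str:
    """Policy check that runs before any shutdown command is executed."""
    if RESTRICTED_TAGS & set(server["tags"]):
        alerts.append(f"agent tried to shut down restricted server {server['id']}")
        raise PermissionError("Access Denied")
    return f"{server['id']} stopped"


fleet = [
    {"id": "web-01", "tags": ["Idle"]},
    {"id": "db-01", "tags": ["Production-Critical"]},
]
outcomes = []
for server in fleet:
    try:
        outcomes.append(guarded_shutdown(server))
    except PermissionError as err:
        outcomes.append(str(err))
```

Note that the agent never asks for permission here: it simply fails on the restricted server, and the alert is a side effect of the guardrail.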

| Pattern | Best Use Case | Primary Goal |
| --- | --- | --- |
| Confirmation Gate | Regulated Workflows (Insurance, Legal) | Accountability |
| Uncertainty Escalation | Complex Reasoning (Support, Research) | Accuracy |
| Two-Person Rule | Fiduciary Tasks (Finance, Security) | Fraud Prevention |
| Draft & Refine | Creative/Technical Work (Code, Copy) | Augmentation |
| Safety Boundary | High-Stakes Infra (DevOps, Security) | Risk Mitigation |

Implementation Patterns for HITL (How do we build HITL?)

Implementation patterns (also known as Architectural or Tactical patterns) are the technical mechanisms used to physically pause the AI and involve the human. These are not just “features” — they are architectural responses to fundamental challenges in agentic systems:

- Probabilistic reasoning (the model’s strength) must never cross into deterministic authority without explicit control.

- Human attention is scarce and expensive — we must minimize interruptions while maximizing value when humans are involved.

- Enterprise processes span seconds to days; the system must survive timeouts, restarts, and human unavailability without losing fidelity.

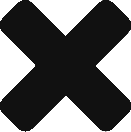

1. Active Handoff (Synchronous Return of Control)

The Active Handoff ensures that while the AI does the labor of reasoning, the human maintains the authority of execution.

The agentic system reaches a boundary, pauses its reasoning loop, packages its current intent and context as structured data (typically JSON), and hands control back to the calling application.

This prevents models from assuming authority over ambiguous, high-stakes, or interactive decisions. Instead of guessing missing data, overriding rules, or finalizing sensitive actions, the system creates a live bridge where reasoning stays probabilistic (model) and execution stays deterministic (application code + human).

Best For

Real-time collaboration, interactive refinement, routine confirmations (chat interfaces, draft reviews, parameter elicitation).
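The handoff loop can be sketched as follows. Everything here is illustrative: `agent_step` stands in for the model's reasoning, the `file_claim` intent and its fields are invented for the example, and `ask_human` represents whatever UI the calling application uses. The point is that the pause and the resume are owned by application code, and that only structured JSON crosses the boundary.

```python
import json


def agent_step(task: dict) -> dict:
    """Hypothetical reasoning step: act if inputs are complete, else hand control back."""
    missing = [name for name in ("policy_id", "amount") if name not in task]
    if missing:
        return {"status": "needs_input", "intent": "file_claim",
                "missing": missing, "context": task}
    return {"status": "done", "result": f"claim filed for {task['policy_id']}"}


def run_with_handoff(task: dict, ask_human, max_rounds: int = 3) -> dict:
    """Application-side loop that pauses on a handoff and resumes with human input."""
    for _ in range(max_rounds):
        outcome = agent_step(task)
        if outcome["status"] != "needs_input":
            return outcome
        payload = json.dumps(outcome)             # intent and context as structured data
        answers = ask_human(json.loads(payload))  # the human fills the gap
        task = {**task, **answers}
    return {"status": "abandoned", "context": task}


final = run_with_handoff({"policy_id": "P-1"},
                         ask_human=lambda request: {"amount": 1200})
```

The `max_rounds` bound is a design choice for the sketch so that a human who never supplies the missing field cannot keep the session looping.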

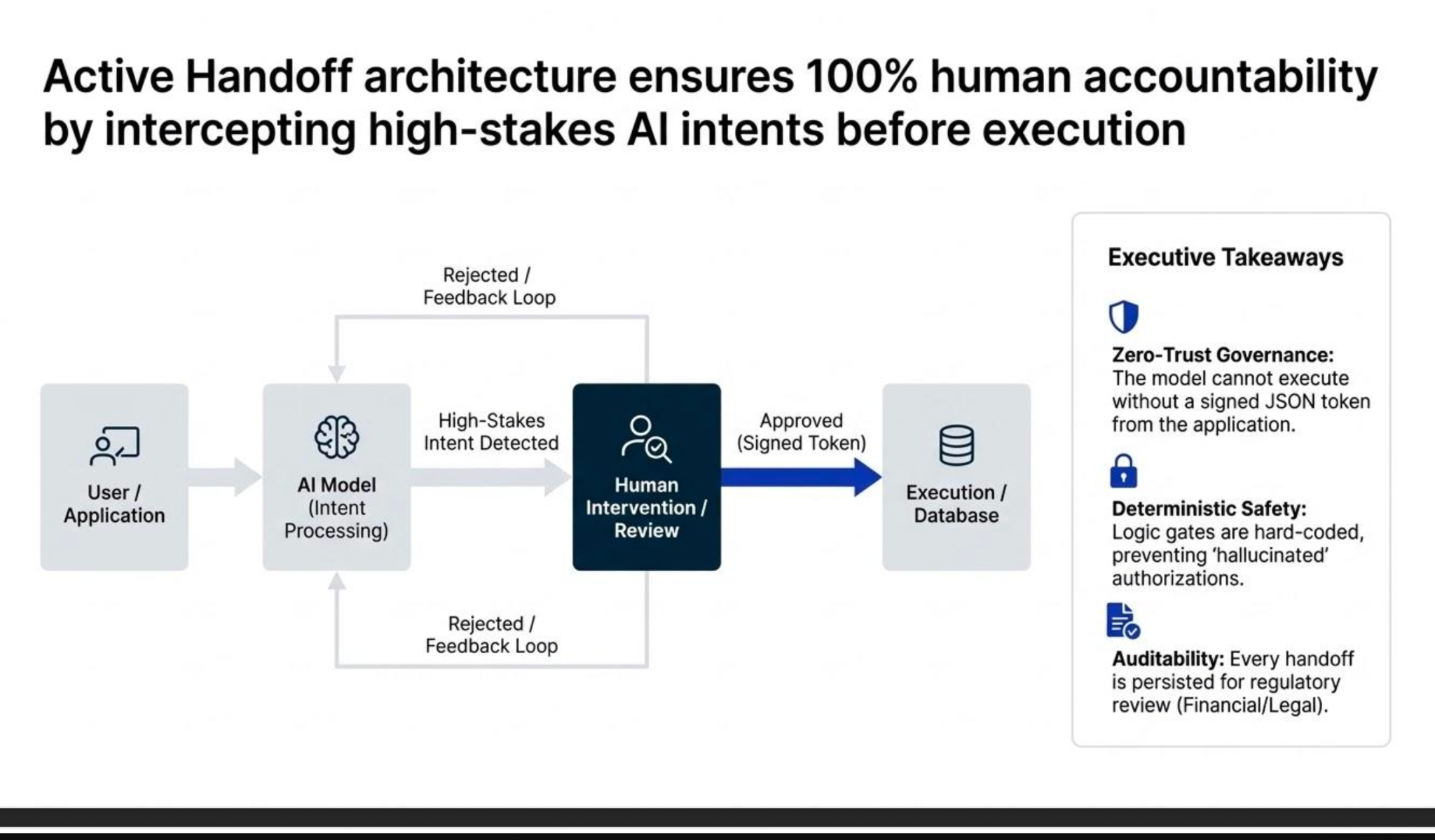

2. Durable Callback (Asynchronous Deep Freeze)

The workflow state is fully snapshotted into durable infrastructure. The agent enters a true paused/suspended state and waits for an external resume signal (e.g., API callback, task token, human-triggered event) — potentially spanning hours, days, or weeks.

This pattern solves session fragility and human availability gaps. Real-time sessions are expensive and unreliable for asynchronous business processes (approvals during off-hours, multi-day reviews, weekend escalations).

This pattern moves the pause from volatile application memory to persistent, recoverable infrastructure.

Best For

Regulated approvals, executive gates, compliance workflows, long-running escalations.
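With AWS Step Functions, one common way to get this behavior is the task-token callback integration. The sketch below builds an Amazon States Language task state that sends a message to SQS and waits, then shows the resume call a reviewer's tool would make. The queue URL, the `ApplyDecision` state name, the `$.request` input path, and the output payload are assumptions for illustration; `sfn_client` is expected to be a boto3 `stepfunctions` client.

```python
import json


def approval_state(queue_url: str, timeout_seconds: int) -> dict:
    """Task state that pauses the workflow until its task token is returned."""
    return {
        "Type": "Task",
        "Resource": "arn:aws:states:::sqs:sendMessage.waitForTaskToken",
        "Parameters": {
            "QueueUrl": queue_url,
            "MessageBody": {
                "TaskToken.$": "$$.Task.Token",  # token the reviewer's tool sends back
                "Request.$": "$.request",        # assumed location of the agent's request
            },
        },
        "TimeoutSeconds": timeout_seconds,       # fail the state instead of waiting forever
        "Next": "ApplyDecision",                 # hypothetical follow-up state
    }


def resume(sfn_client, task_token: str, approved: bool, reviewer: str) -> None:
    """Resume the paused execution once the human has decided."""
    if approved:
        sfn_client.send_task_success(
            taskToken=task_token,
            output=json.dumps({"approved_by": reviewer}),
        )
    else:
        sfn_client.send_task_failure(
            taskToken=task_token,
            error="Rejected",
            cause=f"Rejected by {reviewer}",
        )
```

Because the waiting state is held by the workflow service rather than by the agent process, the agent can be restarted or scaled down while the approval is pending.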

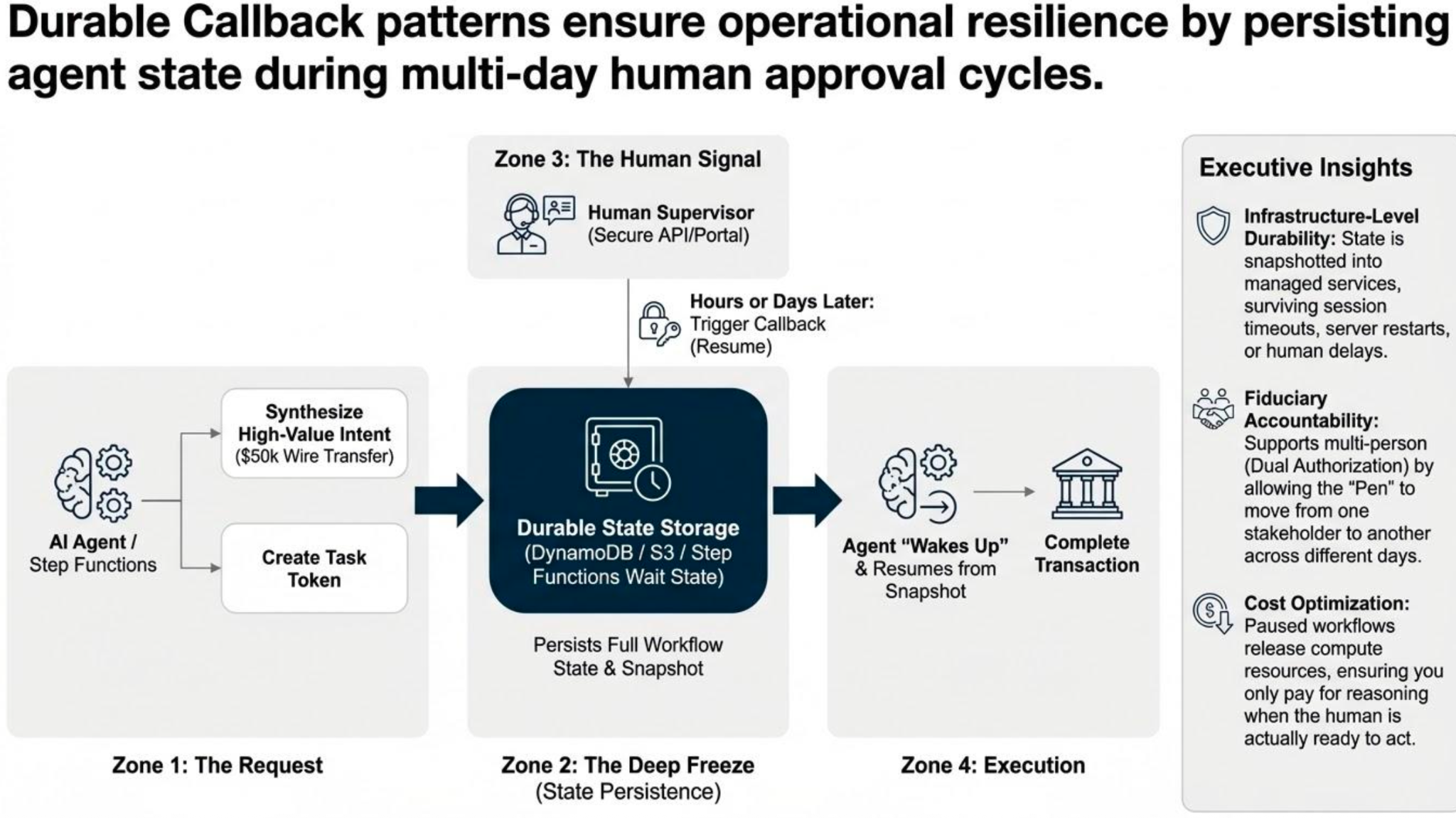

3. Live Takeover (Real-Time Visual Intervention)

For agents operating in visual or physical interfaces (browsers, GUIs, forms), the system streams a live view of the agent’s environment to a human supervisor. The human temporarily assumes full control (mouse, keyboard, input) to resolve blockers, then returns control to the agent.

The goal is to break edge-case deadlocks that pure reasoning cannot overcome (CAPTCHAs, broken layouts, MFA flows, dynamic UIs). Without takeover, agents fail, loop infinitely, or require full human re-execution, which wastes the value of the automation.

Best For

Browser automation, RPA-style tasks, web navigation, complex form-filling.
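The streaming side depends on the browser tooling in use, but the control handover itself can be sketched independently. `ControlSession` below is a hypothetical arbiter that accepts input only from whoever currently holds control, so agent actions are rejected while the human is driving.

```python
class ControlSession:
    """Tracks who may send input to the shared browser session."""

    def __init__(self) -> None:
        self.owner = "agent"
        self.log = []  # (actor, event) pairs, useful as an audit trail

    def send_input(self, actor: str, event: str) -> None:
        if actor != self.owner:
            raise PermissionError(f"{actor} does not hold control")
        self.log.append((actor, event))

    def take_over(self) -> None:
        """Human supervisor assumes control."""
        self.owner = "human"

    def hand_back(self) -> None:
        """Human returns control to the agent."""
        self.owner = "agent"


session = ControlSession()
session.send_input("agent", "open login page")
session.take_over()
session.send_input("human", "solve CAPTCHA")
try:
    session.send_input("agent", "click submit")  # rejected during takeover
    agent_blocked = False
except PermissionError:
    agent_blocked = True
session.hand_back()
session.send_input("agent", "continue checkout")
```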

Quick Comparison Table

| Pattern | State Durability | Primary Goal |
| --- | --- | --- |
| Active Handoff | Live session memory | Speed + accuracy in flow |
| Durable Callback | Infrastructure | Accountability + auditability |
| Live Takeover | Visual stream | Resilience in edge cases |

4. Hybrid Patterns & Graceful Escalation

In a production environment, these patterns rarely exist in isolation. The most resilient systems in 2026 utilize Hybrid Patterns, where a passive guardrail serves as the trigger for an active human intervention.

Think of this as Graceful Escalation. Instead of the agent simply “failing” with a permission error, it uses the failure as a signal to bring in a human.

Example: From “Access Denied” to “Request Override”

Imagine a Cloud Ops agent attempting to reboot a server.

- The Safety Boundary (Passive): An external policy engine (like Cedar) detects the server is tagged #Production-Critical and blocks the reboot command.

- The Trigger: Rather than giving up, the agent receives the “Access Denied” signal and recognizes it has hit a hard limit.

- The Active Handoff (Return of Control, ROC): The agent automatically triggers an Active Handoff. It presents a UI to the Site Reliability Engineer (SRE) that says: “I attempted to reboot this server for optimization, but it is marked as critical. Do you wish to grant a one-time override for this action?”
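The three steps above can be sketched as a wrapper that converts a policy denial into an override request. The tag check stands in for an external policy engine, and `ask_sre` is a hypothetical callback for the SRE-facing UI; a real system would have the policy engine validate the override rather than trust a string.

```python
from typing import Callable, Optional


def reboot(server: dict, override: Optional[str] = None) -> str:
    """Hypothetical guarded command; denies critical servers unless overridden."""
    if "Production-Critical" in server["tags"] and override is None:
        raise PermissionError("Access Denied")
    return f"{server['id']} rebooted"


def reboot_with_escalation(server: dict,
                           ask_sre: Callable[[dict], Optional[str]]) -> str:
    """Turn a policy denial into an override request instead of a dead end."""
    try:
        return reboot(server)
    except PermissionError:
        request = {
            "action": "reboot",
            "server": server["id"],
            "reason": "optimization",
            "blocked_by": "Production-Critical tag",
        }
        grant = ask_sre(request)               # Active Handoff: the human decides
        if grant is None:
            return f"{server['id']} left untouched"
        return reboot(server, override=grant)  # override applies to this call only


db = {"id": "db-01", "tags": ["Production-Critical"]}
denied = reboot_with_escalation(db, ask_sre=lambda request: None)
granted = reboot_with_escalation(db, ask_sre=lambda request: "override-by-sre")
```

Passing the override as an argument to a single call, rather than changing the server's tags or the policy, keeps the exception one-time by construction.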

Closing thoughts: From Autonomy to Orchestration

The goal of implementing these patterns isn’t to create an agent that works for us, but one that works with us. In the race to automate, it is tempting to view Human-in-the-Loop as a bottleneck—a friction point that slows down the machine. But as we’ve seen, HITL is actually the enabler of scale. By building robust bridges between probabilistic reasoning and deterministic execution, we aren’t just preventing errors; we are building the infrastructure of trust.

We are no longer just coding applications; we are choreographing a new era of collaborative intelligence.